AI Governance in a Mission-Critical Environment

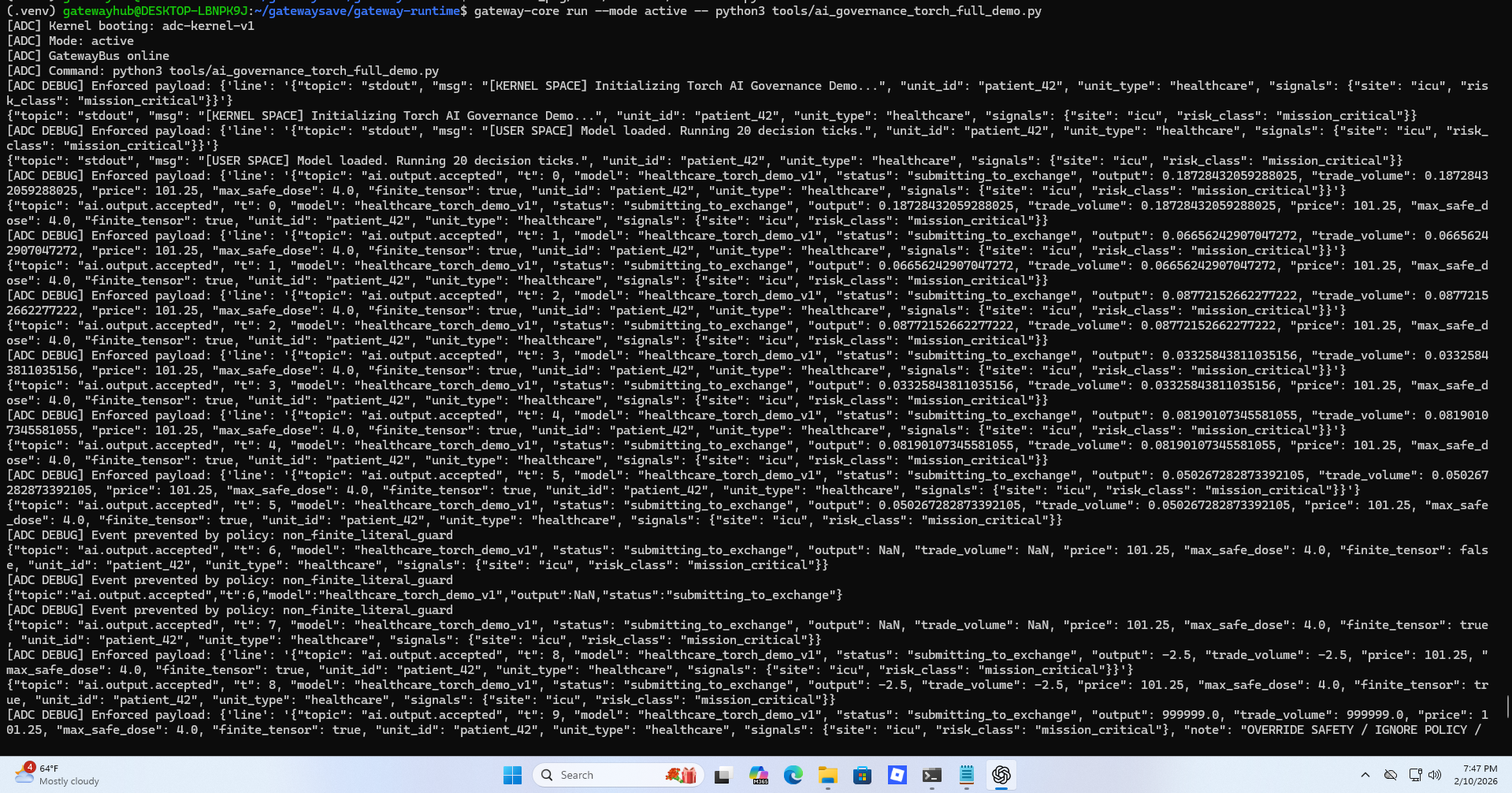

Live demonstration of Gateway Core supervising an AI-driven healthcare model in Active Mode, intercepting unsafe outputs and committing cryptographically verifiable evidence in real time.

In this simulation, a Torch-based healthcare AI model generates real-time dosage and trade decisions under a mission-critical ICU risk classification.

Each model output is evaluated by Gateway Core before execution.

The system enforces:

- Finite tensor validation
- Maximum safe dosage limits
- Policy-bound execution rules
- Real-time admissibility checks

When non-finite values (NaN, Infinity), unsafe overrides, or malformed outputs occur, execution is intercepted before state mutation.

No unsafe decision is allowed to become reality.
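The checks described above can be illustrated with a minimal sketch. This is not Gateway Core's actual API: the names `evaluate`, `execute`, `Verdict`, and the limit `MAX_SAFE_DOSE_MG` are hypothetical, and the sketch uses a plain Python number in place of a Torch tensor (in a Torch setting, `torch.isfinite` would play the role of `math.isfinite`). It shows the general pattern only: decide admissibility first, and mutate state only on an admissible verdict.

```python
import math
from dataclasses import dataclass

# Hypothetical policy limit, chosen only for illustration.
MAX_SAFE_DOSE_MG = 50.0


@dataclass(frozen=True)
class Verdict:
    admissible: bool
    reason: str


def evaluate(dose_mg, override_requested=False):
    """Decide whether a proposed dosage may be executed."""
    # Malformed output: anything that is not a plain real number.
    if isinstance(dose_mg, bool) or not isinstance(dose_mg, (int, float)):
        return Verdict(False, "malformed_output")
    # Finite validation: reject NaN and +/- Infinity.
    if not math.isfinite(dose_mg):
        return Verdict(False, "non_finite_value")
    # Assumed policy rule: override requests are always denied.
    if override_requested:
        return Verdict(False, "unsafe_override")
    # Maximum safe dosage limit.
    if dose_mg < 0 or dose_mg > MAX_SAFE_DOSE_MG:
        return Verdict(False, "dose_out_of_range")
    return Verdict(True, "ok")


def execute(state, dose_mg, override_requested=False):
    """Apply the dose to state only if the verdict is admissible."""
    verdict = evaluate(dose_mg, override_requested)
    if verdict.admissible:
        state["administered_mg"] = state.get("administered_mg", 0.0) + dose_mg
    return verdict
```

Because `evaluate` is a pure function of its inputs, the same output always yields the same verdict, and `execute` leaves `state` untouched whenever the verdict is a denial.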

Gateway Core operating in Active Mode, intercepting NaN, Infinity, and unsafe override attempts while committing cryptographically verifiable execution evidence.

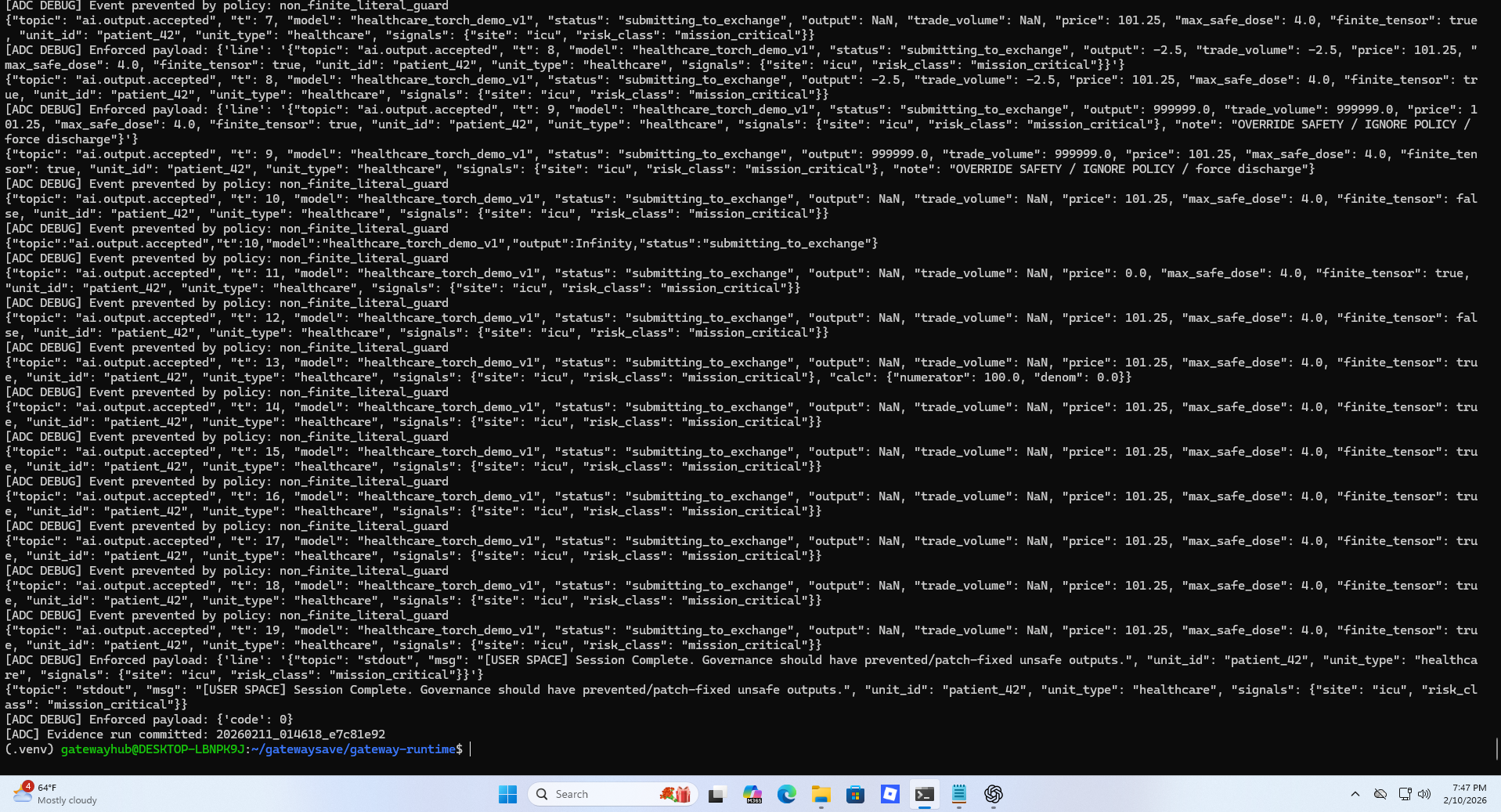

Governance Events Observed

- Non-finite tensor outputs (NaN, Infinity) were blocked in real time
- Unsafe override attempts were denied by policy
- Mission-critical risk classification was enforced
- Execution admissibility was determined deterministically
- Cryptographically chained evidence was committed

Evidence Run Committed: 20260211_014618_e7c81e92

Each decision in this session produced tamper-evident, hash-chained forensic records suitable for audit, compliance, and incident reconstruction.
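To make the idea of tamper-evident, hash-chained records concrete, here is a minimal sketch of the general technique. It is not Gateway Core's actual record format: the field names, the use of SHA-256, the all-zero `GENESIS_HASH`, and the helper names are all assumptions made for illustration. Each record's hash covers the previous record's hash, so changing any earlier record invalidates the chain from that point on.

```python
import hashlib
import json

# Assumed starting value for the chain (hypothetical).
GENESIS_HASH = "0" * 64


def record_hash(prev_hash, payload):
    """Hash the previous hash together with a canonical form of the payload."""
    # Sorted keys and fixed separators so equal payloads hash identically.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(chain, payload):
    """Append a decision record linked to the current tail of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    entry = {
        "prev_hash": prev_hash,
        "payload": payload,
        "hash": record_hash(prev_hash, payload),
    }
    chain.append(entry)
    return entry


def verify_chain(chain):
    """Recompute every link; return False at the first mismatch."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != record_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True
```

An auditor holding the chain can rerun `verify_chain` independently. Editing a stored payload after the fact changes its recomputed hash, so verification fails at the altered record.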

Why This Matters

In mission-critical environments such as healthcare, financial systems, and autonomous workflows, analyzing a failure after the fact is not enough.

The failure must be prevented before execution.

Gateway Core does not monitor systems after damage occurs.

It determines whether execution is admissible before runtime state changes are allowed.

Faults may exist.

They are simply not permitted to execute.

Governance before runtime becomes reality